When building neural networks (NNs) for trading, the most important thing to consider is how you structure your inputs and outputs so that the network generalizes meaningfully and, therefore, predicts well. Most of the attempts in the research literature to develop NNs for financial time series use a typical linear input method, where you give your neural network something like the closes of the past 10 weeks and expect it to predict the close for tomorrow. However, when we consider that the neural network must have all the relevant information to make a trading decision, it becomes clear that this method has some serious disadvantages. In this post I will talk about these disadvantages, as well as a new and revolutionary method, which I found in the literature, for making the neural network “see” things much more like you and I do! The images shown in this post were taken from this article.

First of all, let us see what is wrong with the linear input methods that we usually use to build NNs to predict financial time series. The neural network sees a string of values for some input, but nothing in that input tells it how the values relate to each other. For example, the neural network may know that the closes 1.2, 1.3 and 1.4 lead to the close 1.1, but it doesn’t know that 1.2 came first, then 1.3 and then 1.4, because when you give inputs in this way there is no established relationship between the values. The problem with this is that the NN finds it very hard to make generalizations, because the data may appear random. What happens in this case is that you tend to curve-fit to the training values, and out-of-sample results tend to fail quite dramatically.
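
As a rough illustration of the linear input method described above, here is a minimal Python sketch that slices a close series into fixed-length input vectors, each paired with the close that follows. The function name `make_windows` and the window length are assumptions made for this example; they do not come from any particular paper or library.

```python
def make_windows(closes, window=10):
    """Return (inputs, targets): each input is `window` consecutive closes,
    each target is the close that immediately follows that window."""
    inputs, targets = [], []
    for i in range(len(closes) - window):
        inputs.append(closes[i:i + window])
        targets.append(closes[i + window])
    return inputs, targets


# Hypothetical close series, reusing the numbers from the text.
closes = [1.20, 1.30, 1.40, 1.10, 1.15, 1.25]
X, y = make_windows(closes, window=3)
print(X[0], "->", y[0])  # the first window and the close that followed it
```

Each input vector here is just a flat list of numbers handed to the input layer; nothing in it says explicitly how one value relates to the next, which is the weakness discussed above.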

If we want the NN to give us meaningful outputs, we may need to give it information equivalent to the information we use as traders to derive our trading decisions. Giving the NN a string of close values (or another similar input) is like trying to trade by reading a tape of values and trying to figure out the connections. The NN has problems doing this because the information you are giving it is incomplete. Giving it all the information in the form of OHLC won’t help either, since this would be equivalent to reading a tape of OHLC values (would this help you out?). In essence, the price levels on the chart (the actual values of a candle’s OHLC) are quite irrelevant; the only thing that matters is the geometrical patterns formed by price on the charts.

The key, my friends, is the chart. I would say that the vast majority of technical traders make decisions based almost purely on visual information. It doesn’t matter what the values of the OHLC of the past 100 candles on the screen are; what matters is the way in which these values “paint a picture” on the screen. It would be irrelevant if these values were what they are or if they were multiplied by 10: the actual result of the analysis regarding whether to go long, short or avoid trading would still be the same. This means that the information we have been attempting to feed an NN is extremely sub-optimal. What the network needs is not the OHLC data (or something derived from it) but the actual picture.

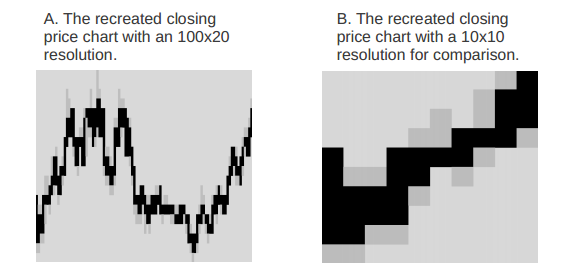

But how do you make the NN “see” the picture? Well, you literally train it with the picture. You can take an image of a chart and, using image processing techniques, present it to the NN as a canvas of grey, black and white pixels from which it can draw clear connections between the geometrical patterns on the chart and buy/sell/neutral predictions. You train the NN with an image of a resolution high enough to depict the geometrical patterns, and you use a single output neuron as the trading decision. The idea is that with this training the neural network will be able to evolve a clear view of what type of geometry leads to what prediction. For example, the network may realise that certain shapes on the chart imply that a long is likely to succeed while others imply a reversal. The best way to use this is probably to train the network on an image of a lower time frame than the time span you want to predict, using, for example, the 1H chart to make decisions regarding direction for the next 24 hours.
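
To make the idea of feeding the network a picture a bit more concrete, here is a minimal Python sketch that draws a window of closes onto a small black-and-white pixel grid. This is not the method from the article: the names `rasterize` and `flatten`, the grid height and the one-pixel-per-bar rendering are all assumptions chosen for illustration. The grid is scaled between the window's own low and high, so only the shape of the series survives.

```python
def rasterize(closes, height=16):
    """Draw a close series as a height x len(closes) grid of 0/1 pixels.
    Each column holds one lit pixel whose row reflects that close, scaled
    between the window's low and high (row 0 is the top of the picture)."""
    lo, hi = min(closes), max(closes)
    span = (hi - lo) or 1.0  # avoid division by zero on a flat window
    grid = [[0] * len(closes) for _ in range(height)]
    for col, price in enumerate(closes):
        level = round((price - lo) / span * (height - 1))
        grid[height - 1 - level][col] = 1
    return grid


def flatten(grid):
    """Turn the 2D picture into the flat list an input layer would receive."""
    return [pixel for row in grid for pixel in row]


closes = [1.0, 1.5, 2.0, 1.25, 1.75]  # hypothetical values
picture = rasterize(closes, height=5)
scaled_picture = rasterize([c * 10 for c in closes], height=5)

for row in picture:
    print("".join("#" if pixel else "." for pixel in row))
print(picture == scaled_picture)  # True for this example
```

For these example values, multiplying every close by 10 produces the same picture, which is the point made earlier about absolute price levels. Every pixel also has a fixed position relative to its neighbours, which is where the geometrical relationship between inputs comes from.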

The best thing is that someone already tested this and published an article with the results (you can read it here). The results reported there show that an evolutionary neural network model that looks at pictures of charts instead of actual OHLC or indicator data has a much more powerful capability to generalize and make profits than the traditional neural network approaches. This is extremely interesting and points to a way in which we might be able to create new NN strategies. It also opens the way to other possibilities: what happens if you show the NN renko charts or fixed volume charts? What happens if you show it multiple time frames? Or multiple currencies? The sky is the limit as to how this can be explored.

Of course this endeavour is quite complex, as it requires careful synchronization of image saving and fast image processing capabilities, coupled with code to process the images and finally train the NN. However, once the first implementation is made it should be easy to come up with a lot of trading solutions. Next year one of my goals will be to develop an NN strategy using image recognition so that we can use it to increase our understanding within the Asirikuy community. Hopefully this will add a lot of power to our current NN trading arsenal. It will be very interesting to see how this image processing approach tackles the multiple-instrument problem.

If you would like to learn more about our work and about neural networks in Forex trading, please consider joining Asirikuy.com, a website filled with educational videos, trading systems, development and a sound, honest and transparent approach towards automated trading in general. I hope you enjoyed this article! :o)

How is that much different from preprocessed price data normalized to 0..20? The summary of the quoted article doesn’t look especially promising.

Hi pips.maker,

Thank you for your post :o) The difference is that by processing an image there is an implicit relationship in the data which is not possible to get when you do a direct feed of price data (regardless of how you process or normalize it). As the article highlights the main advancement is in the achievement of an implicit geometric association in the inputs. The biggest thing here is that the inputs have a relationship to each other that the neural network can read directly, something you can never achieve with a traditional processing of time series data.

You should bear in mind that the article – as many in the financial literature – is just exploring a new concept and providing some fundamental proof, however this – in no way – gives you an idea of what the technique can achieve. In essence the article uncovers a very new and promising way to study neural network input relationships, worth a coding try :o) I would encourage you to read the whole article to get a better glimpse at why this is such a relevant achievement. Thanks again for posting!

Best regards,

Daniel

I think you are wrong with this one:

“It would be irrelevant if these values were what they are or if they were multiplied by 10, the actual result of the analysis regarding whether to go long, short or avoid trading would still be the same.”

Most systems look for the crossing of some limit, and here you lose this information and treat noise and strong signals equally.

Hi pips.maker,

Thank you for your post :o) I think that you misunderstood what I said. What I wanted to say is that the information drawn from the charts is independent of the absolute values you have. For example, if you multiplied all the values on your chart by 10 you would still be able to trade the same system; only the absolute value of your cross would change (or it may remain the same: an RSI, for example, would give you the exact same values because it’s a normalized oscillator). What I wanted to make clear is that the truly important thing is the relationship between the values, not the values themselves.
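
The RSI remark can be checked with a few lines of Python. This is a minimal sketch that uses a simple-average variant of the RSI (not Wilder's smoothed version), and the helper name `rsi` and the sample prices are assumptions made for the example.

```python
def rsi(closes, period=14):
    """Simple-average RSI over the last `period` price changes.
    Needs at least period + 1 closes."""
    recent = closes[-(period + 1):]
    changes = [b - a for a, b in zip(recent[:-1], recent[1:])]
    gains = sum(c for c in changes if c > 0)
    losses = sum(-c for c in changes if c < 0)
    if losses == 0:
        return 100.0  # no down moves in the window
    rs = gains / losses
    return 100.0 - 100.0 / (1.0 + rs)


prices = [1.0, 1.5, 1.25, 2.0, 1.75]      # hypothetical closes
prices_x10 = [p * 10 for p in prices]     # same chart, different scale

print(rsi(prices, period=4), rsi(prices_x10, period=4))
```

Both calls give the same reading because the scale factor cancels in the ratio of gains to losses.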

You’re right that I am making no distinction between noise and signals when feeding the neural network the “complete picture”, and this is in fact what I want the NN to do (to be able to distinguish noise from true trading information on the chart). Just as a trader does when looking at a trading chart, the NN should, through training, find some patterns within the chaos that it can use to make a profit. The important thing is that the picture, unlike the OHLC information, has an implicit geometrical association that the NN should be able to use. Obviously I am not saying that this is going to be the greatest thing in the world; I am just saying that it will provide some interesting insight and will probably improve NN outcomes. We will need to experiment to see if this is in fact the case. I hope this helps you understand my point :o)

Best Regards,

Daniel

How do you train this kind of NN? Do you need to select cases one by one visually from history and then feed them to the NN?

Hi Fernando,

Thank you for your post :o) Actually it’s far simpler than that. Suppose you want to predict the next day’s direction (if the next day is bullish go long, if it is bearish go short). In this case you train the EA with the 1H chart for the past few days (a lower time frame) as the input, and you use the next day’s directionality as the output. You are just saying to the NN: “after you see something like this, go long/short”. After the NN trains on the charts it should be able to give you some prediction of the next day’s directionality based simply on the picture of the 1H chart of the past few days. You train on 100-300 examples, as you would a regular NN using the time series. You do not hand-pick the examples; you let the NN figure the relationships out.
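
The labelling scheme described above can be sketched in Python as follows. The function name `build_examples`, the three-day lookback and the 24 bars per day are assumptions for illustration. In a real setup each window would be rendered as a chart image before being fed to the NN; here it is kept as the raw hourly closes.

```python
def build_examples(hourly_closes, lookback_days=3, bars_per_day=24):
    """Pair each lookback window of hourly closes with the direction of the
    following day: +1 if that day ends above the last close in the window,
    otherwise -1."""
    window = lookback_days * bars_per_day
    examples = []
    last_start = len(hourly_closes) - window - bars_per_day
    # Step one day at a time so every example predicts one whole next day.
    for start in range(0, last_start + 1, bars_per_day):
        end = start + window
        past = hourly_closes[start:end]
        next_day = hourly_closes[end:end + bars_per_day]
        label = 1 if next_day[-1] > past[-1] else -1
        examples.append((past, label))
    return examples


# Synthetic data: five days of steadily rising hourly closes.
rising = [1.0 + 0.001 * i for i in range(5 * 24)]
examples = build_examples(rising)
print(len(examples), examples[0][1])
```

No example is hand-picked: the loop walks through history and labels every window automatically from what happened next.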

In practice this is, hopefully, not going to be that hard :o) You simply need to create an image of the price chart using a charting library and then use an image processing library to reduce the resolution so that the NN can process it (if the image is too large, it will simply require too many neurons). Using F4 we should be able to do this without too much hassle :o) This will be on my to-do list after game theory and WFA, which will be released when I come back from my honeymoon. I hope this answers your question :o)
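
The resolution-reduction step can be sketched in plain Python by averaging square blocks of pixels. A real implementation would more likely use an image processing library; the function name `downsample` and the block-averaging approach are assumptions made for this example.

```python
def downsample(grid, factor):
    """Shrink a 2D pixel grid by averaging each factor x factor block.
    Rows and columns that do not fill a whole block are dropped."""
    rows, cols = len(grid) // factor, len(grid[0]) // factor
    small = []
    for r in range(rows):
        row = []
        for c in range(cols):
            block = [grid[r * factor + i][c * factor + j]
                     for i in range(factor) for j in range(factor)]
            row.append(sum(block) / len(block))
        small.append(row)
    return small


# A hypothetical 4x4 black-and-white image (1 = lit pixel).
image = [
    [1, 1, 0, 0],
    [1, 1, 0, 0],
    [0, 0, 1, 0],
    [0, 0, 0, 0],
]
print(downsample(image, 2))  # a 2x2 image of grey levels between 0 and 1
```

Halving the resolution cuts the number of input neurons to a quarter, and the averaged blocks become shades of grey rather than pure black or white.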

Best Regards,

Daniel

Hi Daniel,

It’s an interesting idea, recognizing shapes with an NN. I’m just curious: what do you think about a real implementation of this process in code so that, as you said, the “NN can process it”? I mean, firstly, I’m not sure that it can be implemented in MT4 code; the process is too complex. Secondly, as input data the net receives a real file with an image (or an object with an image in it) as binary data, so in general it’s also a bunch of numbers. And it’s a denormalized bunch.

Anyway, it’s a future task of mine to implement this in Nalice, if the existing process doesn’t turn out to be positive.

Also: nice blog, man.

Asirikuy.com is also very interesting, and I have a proposal: maybe you should try myfxbook.com to get detailed widgets? It’s totally free and I like it very much ))

Hi admin,

I would like to read the original article which you mentioned below the last picture. You wrote: “The best thing is that someone already tested this and published an article with the results (you can read it here).” But it’s not a link. Could you provide a link to this article, please?

Best Regards,

Kryptox

Hi Kryptox,

Thank you for your post :o) Sure, you can read the article here: http://arxiv.org/abs/1111.5892

Best Regards,

Daniel