As you may have read in some of my previous posts, one of our most important experiments at Asirikuy involves the creation of a strategy tester module for the F4 framework, so that we can run Walk Forward Analysis (WFA) using the MT4 platform (or any other platform for which we have a front-end coded). During the past month this strategy tester has become a reality, enabling us to run complex walk forward analyses in order to better understand this evaluation technique and how our different trading tactics behave when evaluated through it. In today’s post I am going to cover some of my first results and observations, as well as some of the areas where I believe we can improve our current WFA implementation.

The F4 strategy tester module allows us to do some pretty nifty things. When running a back-test on the MT4 platform, the strategy tester and WFA module let us run an optimization over the past X days after every trade and then automatically change the parameters for the next trade so that they represent the “best result” from the optimization period. The WFA procedure, as it is currently implemented, runs the optimization after every trade and picks as the best result the parameter set with the highest profit during the optimization run. Additionally, the ranges and steps for the different parameters used in the optimization are defined through a simple MT4 back-testing set file. The result is a back-test where your parameters are constantly being rotated: a system with a constant first degree adaptation based on an optimization carried out over the X most recent days. The code has also been written so that improvements can be added without difficult work: adding more WFA selection algorithms for the “best result” in each optimization, as well as implementing a window that allows updating after X days (and not on every trade), are both within reach for further exploring the characteristics of the WFA technique.
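To make the procedure above more concrete, here is a minimal Python sketch of a walk forward loop. It is not the F4 code (which is C++ and reads its ranges from an MT4 set file): the toy momentum rule, the `lookback` parameter and every function name below are assumptions made purely for illustration, and the loop re-optimizes on every bar rather than after every trade to keep things short.

```python
from itertools import product

def sign(x):
    return (x > 0) - (x < 0)

def backtest(prices, params):
    """Profit of a toy momentum rule over `prices` (hypothetical strategy)."""
    lb = params["lookback"]
    return sum(
        sign(prices[i] - prices[i - lb]) * (prices[i + 1] - prices[i])
        for i in range(lb, len(prices) - 1)
    )

def signal(prices, params):
    """Position (+1, 0 or -1) to hold after the last bar in `prices`."""
    return sign(prices[-1] - prices[-1 - params["lookback"]])

def grid(ranges):
    """Expand {name: values} into every parameter combination (brute force)."""
    names = list(ranges)
    return [dict(zip(names, combo)) for combo in product(*ranges.values())]

def walk_forward(prices, ranges, window):
    """Re-optimize on the last `window` bars, then trade the next bar.

    The "best result" is simply the highest profit in the optimization
    window, as in the current implementation described in the post.
    `window` must be larger than the biggest lookback being tested.
    """
    candidates = grid(ranges)
    total, chosen = 0, []
    for t in range(window, len(prices) - 1):
        history = prices[t - window : t + 1]
        best = max(candidates, key=lambda p: backtest(history, p))
        total += signal(history, best) * (prices[t + 1] - prices[t])
        chosen.append(best)
    return total, chosen
```

A call such as `walk_forward(prices, {"lookback": [2, 5, 10]}, window=20)` returns the non-compounded profit together with the parameter set used at each step, which makes it easy to see how often the parameters rotate.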

An added advantage of the above F4 implementation is that the C++ back-testing code is quite fast, so optimizations take much less time than they would in MT4. To give you an example, the strategy tester can run through about 5000 parameter sets for a one year period in less than a minute, while the MT4 platform can take at least half an hour to carry out the exact same optimization. The gain in optimization speed ranges from about 2x to 30x depending on the complexity of the strategy being evaluated. The biggest gains are made for strategies where most of the testing lag comes from MT4 procedures, while the lowest gains are seen for strategies with heavy calculation times (like NN strategies).

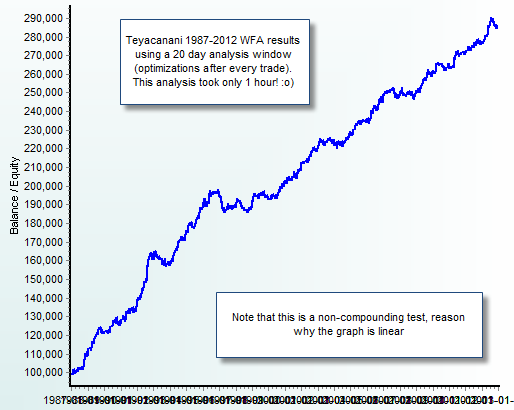

In order to test this new WFA module I decided to start with the simplest system we have at Asirikuy (from a parameter space perspective), which is clearly Teyacanani. The results of the test, a 25 year WFA non-compounding run, showed that the system can give profitable results when using optimization windows anywhere from 20 to 200 days. The statistics were inferior in some aspects to the regular 9 year rank stable results we usually use, but superior in others (such as a shorter maximum drawdown period length and a lower Ulcer Index). Another interesting thing I noticed was that the system failed to pass the WFA when I included the trailing stop and time exit based criteria used by this system in the analysis (on the profitable WFA these were disabled), which suggests that the optimum windows are probably very different when such criteria are included.

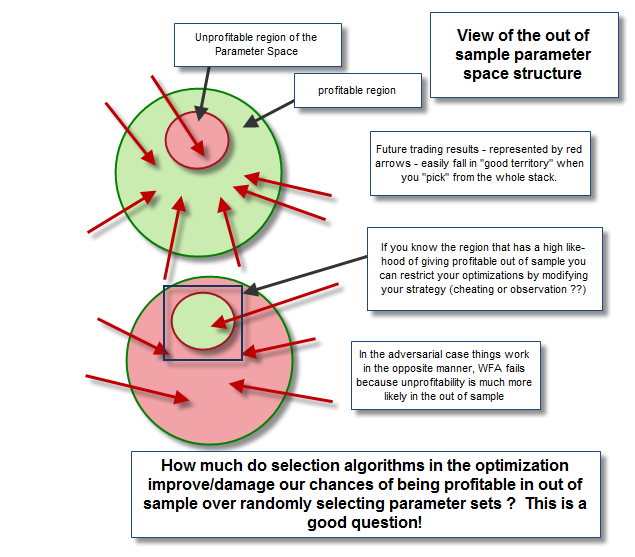

After running experiments with some other strategies with slightly larger parameter spaces, and failing to find a profitable and acceptable WFA from 20 to 200 days, it seems to me that strategies with larger parameter spaces (and consequently more degrees of freedom) need much larger windows in order to adapt in a way that does not degenerate into curve fitting. Strategies that are able to adapt to small windows do so because the inefficiencies they trade are present throughout historical testing (as I have discussed in previous posts), implying that profitability in the parameter space is rather “flat”. You can think about this in terms of randomness as well: the probability of landing on a profitable parameter set by chance is higher when the parameter space is small and the strategy has little freedom, while on big parameter spaces the probability of landing on unprofitable sets becomes much higher. You can also illustrate this effect by taking a strategy with a small parameter space and putting it on a symbol for which it doesn’t work well, where the probability of picking an unfavourable parameter set is larger: you will see that the WFA also fails to give good results when using small windows.
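The randomness argument above can be illustrated with a small back-of-the-envelope calculation in Python. This is only a toy model under a strong assumption that is mine and not a measured result: each parameter is treated as independent, and a set counts as profitable only when every parameter falls in its own “good” range. The `(start, stop, step)` tuples mimic the ranges of a set file, and all names and numbers are hypothetical.

```python
from math import prod

def n_values(start, stop, step):
    """Number of values tested for one parameter, both ends included."""
    return (stop - start) // step + 1

def space_size(ranges):
    """Total number of parameter sets a brute force optimizer must run."""
    return prod(n_values(*r) for r in ranges.values())

def chance_all_good(good_fraction_per_param):
    """Toy model: probability that a randomly picked set is profitable,
    assuming independent parameters that must ALL be in their good range."""
    return prod(good_fraction_per_param)

# Hypothetical ranges: every extra parameter multiplies the space size...
one_param = {"period": (10, 50, 10)}
two_params = {"period": (10, 50, 10), "stop": (1, 10, 1)}
print(space_size(one_param), space_size(two_params))

# ...and, under the toy model, shrinks the profitable share of the space.
print(chance_all_good([0.5]), chance_all_good([0.5, 0.5, 0.5]))
```

Real parameter spaces are rarely this clean, since parameters interact, but the sketch shows why adding degrees of freedom can make a small optimization window much more likely to land on an unprofitable set.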

How big should windows be then? My best guess is that the window needs to be big enough as to contain enough information to ensure that no curve fitting takes place, and that the optimization selection criterion (perhaps one of the most important aspects here) also needs to evaluate the stability of a parameter set within the parameter space rather than its net profitability. In the end we would expect windows ranging from a few years to 5-10 years in size for systems with more complex parameter spaces, in order to avoid the curve fitting seen on smaller windows. The big problem when this happens is that the computational cost of evaluating the parameter space isn’t trivial and the data available from most brokers won’t go back more than a few years. (You need to do the WFA with data from your live broker; you cannot load external data from another source because it would damage the analysis, as you might adapt to characteristics that are not present in your broker’s feed.) In this sense, using WFA for any system that needs windows of more than 2 years isn’t practical, because it simply cannot be realized under live trading conditions (at least on most MT4 brokers).
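As an illustration of what a stability-based selection criterion could look like, the Python sketch below scores each parameter set by the worst profit found among itself and its immediate neighbours on the grid, instead of by its own profit alone. This is a simple stand-in chosen for brevity, not the rank analysis used at Asirikuy, and the function names and the integer grid are assumptions.

```python
from itertools import product

def neighbours(point, steps):
    """Grid points within one step in every dimension, the point included."""
    offsets = product((-1, 0, 1), repeat=len(point))
    return [
        tuple(p + o * s for p, o, s in zip(point, off, steps))
        for off in offsets
    ]

def most_stable(profits, steps):
    """Pick the parameter set whose neighbourhood has the best worst case.

    `profits` maps a tuple of integer parameter values to the profit of
    that set in the optimization window; `steps` holds the grid step of
    each parameter. Neighbours outside the tested grid are ignored, which
    slightly favours points on the edge of the grid.
    """
    def score(point):
        return min(profits[n] for n in neighbours(point, steps) if n in profits)
    return max(profits, key=score)
```

With a result set that contains one isolated profit spike and a broad plateau of moderately profitable sets, `max(profits, key=profits.get)` picks the spike while `most_stable` picks a point on the plateau, which is the behaviour you would want if the goal is to avoid curve fitting inside the window.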

Is there a solution? Well, there are two problems here (computational cost and window size) and we can attempt to address each of them. The first can be tackled by “sampling” the parameter space of large systems instead of evaluating it completely, meaning that we can use a genetic optimization technique instead of a pure “brute force” optimizer to find the “best result” in the parameter space (according to whichever criteria we want to optimize). If we code a genetic optimizer to optimize for rank stability we may find a way out of this problem. Obviously this introduces the additional issue that every back-test would give somewhat different results, because genetic optimizations do not guarantee finding the global maximum. This is something you would have to live with for any system with a large parameter space, as it is fairly difficult to evaluate the potentially billions of combinations that may arise (and it is not worth it, as a very large percentage is bound to be unprofitable). Directed optimizations are the best bet here.
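For readers who have not seen one, a genetic optimizer of the kind mentioned above can be sketched in a few lines of Python. This is a deliberately bare-bones version (truncation selection, uniform crossover, random-reset mutation) and not the optimizer used in F4 or MT4; the `fitness` callable, the parameter names and the default settings are all hypothetical.

```python
import random

def genetic_search(fitness, ranges, pop_size=20, generations=15,
                   mutation_rate=0.2, seed=None):
    """Sample the parameter space instead of evaluating every combination.

    `ranges` maps each parameter name to a list of allowed values and
    `fitness` scores one parameter dict (higher is better). Returns the
    best set found and the number of distinct sets actually evaluated.
    """
    rng = random.Random(seed)
    names = list(ranges)
    cache = {}

    def score(ind):
        key = tuple(ind[n] for n in names)
        if key not in cache:  # never back-test the same set twice
            cache[key] = fitness(ind)
        return cache[key]

    population = [{n: rng.choice(ranges[n]) for n in names}
                  for _ in range(pop_size)]
    for _ in range(generations):
        population.sort(key=score, reverse=True)
        parents = population[: pop_size // 2]  # keep the better half
        children = []
        while len(parents) + len(children) < pop_size:
            a, b = rng.sample(parents, 2)
            child = {n: rng.choice((a[n], b[n])) for n in names}  # crossover
            for n in names:
                if rng.random() < mutation_rate:  # random-reset mutation
                    child[n] = rng.choice(ranges[n])
            children.append(child)
        population = parents + children
    return max(population, key=score), len(cache)
```

With the defaults, at most 20 + 15 × 10 = 170 parameter sets are back-tested no matter how large the grid is. The price is the one described above: a different `seed` can return a different “best” set, so repeated back-tests are only reproducible if the seed is fixed.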

The second problem, the optimization window size, cannot be solved entirely, as it depends on the characteristics of the system. The only solution would be to simplify systems (by “freezing” parameters or by simplifying logic if possible) so that you only optimize a region of the parameter space that is able to give profitable results with a certain optimization window size (less than 2 years) on the 25 year WFA test. The problems are both theoretical and technical, and solving them will be a significant milestone in our quest for more robust system development. It is also worth reminding everyone that WFA is merely a first degree adaptive technique and is by no means a holy grail. As far as I have been able to experiment, WFA is successful when the parameter space is largely profitable, and it fails when degrees of freedom that contain large numbers of unprofitable parameters are introduced. What does this mean for system development, window sizes and robustness? Right now I am still giving it some thought.

If you would like to learn more about algorithmic trading systems and how you too can learn to design and program your own strategies, please consider joining Asirikuy.com, a website filled with educational videos, trading systems, development and a sound, honest and transparent approach towards automated trading in general. I hope you enjoyed this article! :o)

Interesting topic.

How do you detect the death of a WFA strategy?

I guess Monte Carlo analysis should be treated differently since the parameter sets are changing constantly.

Hi Scalptastic,

Thank you for your post :o) There is no reason to treat the Monte Carlo analysis differently, because you can evaluate the results of the WFA procedure as you would the results of any other strategy. When the statistical characteristics of the WFA procedure go beyond what is expected from it (beyond the MC worst cases) you can have high confidence that something is wrong and stop trading. The MC analysis only cares about there being a distribution of returns; it evaluates that distribution regardless of the underlying technique used to generate it (it doesn’t matter if the underlying technique is plain or a first or second degree adaptive technique). I hope this helps :o)

Best Regards,

Daniel
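The Monte Carlo check described in the reply above can be sketched roughly as follows in Python. The details are assumptions made for illustration and not the Asirikuy implementation: trades from the WFA back-test are resampled with replacement, equity is non-compounding, and the “worst case” is taken as a high quantile of the simulated maximum drawdowns.

```python
import random

def max_drawdown(returns):
    """Largest peak-to-valley drop of a non-compounding equity curve."""
    equity = peak = worst = 0.0
    for r in returns:
        equity += r
        peak = max(peak, equity)
        worst = max(worst, peak - equity)
    return worst

def mc_worst_drawdown(trade_returns, runs=1000, quantile=0.99, seed=0):
    """Worst-case drawdown estimate from bootstrap resampling of trades."""
    rng = random.Random(seed)
    n = len(trade_returns)
    drawdowns = sorted(
        max_drawdown(rng.choices(trade_returns, k=n)) for _ in range(runs)
    )
    return drawdowns[min(runs - 1, int(quantile * runs))]

def looks_broken(live_returns, historical_returns, **kwargs):
    """True when the live drawdown exceeds the simulated worst case."""
    return max_drawdown(live_returns) > mc_worst_drawdown(
        historical_returns, **kwargs
    )
```

Here `historical_returns` would be the per-trade results of the full WFA back-test, exactly as for any non-adaptive strategy. Drawdown is only one of the statistics that could be compared in this way.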

Interesting find Daniel, glad the WFA tests have begun! My belief is that a strategy that fails a WFA is a strategy you need to stay away from.

If possible, please make a video or write a short tutorial on exactly how the WFA module works… I’m a bit confused about how it is implemented in F4 and how to use it correctly…

Hi Franco,

Thank you for your post :o) The problem is defining what “working in WFA” actually means, because this all depends on the window sizes. Rejecting strategies that do not work in WFA on small windows also contradicts my personal trading experience, since we have several systems that do not have positive WFA results in windows of less than 400 days (about 2 trading years) yet have worked as expected on live accounts for 2-3 years (take Atinalla FE as an example). It seems to me that failing to work under a first order adaptive regime only shows that the system needs to be traded in another way due to the composition of its parameter space, but it doesn’t seem to point to a “guaranteed failure” the way things like trade chain dependency do.

So, long story short, I would say that it is a good thing to have, but not fundamental if you have long term rank stable results as a base. The live results show that this is the case (because there are several systems that behave within their historical characteristics and yet are not profitable in WFA). Obviously this is just my point of view, and you can choose whichever methodology and requirements you deem most appropriate. Thanks again for posting,

Best regards,

Daniel

PS: Video about that coming up this week-end :o)

Hi Daniel,

I have had some exposure to WFA before (disclaimer: my research was a bit shallow at the time) and read a few articles about it, and I concluded at the time that the best regression is the linear regression.

The danger of WFA is entering the polynomial world, which is full of curve fitting dangers.

I would think a mix of the two (linear vs polynomial) would work well in testing WFA. For example, instead of re-optimizing after every trade, we should optimize once a month, such as at the beginning of a calendar month, or of a calendar week.

This way you get the benefits of both.

It would be good if you could try this method and compare it to every-trade optimization.

Hi Daniel,

Your 25 year backtest seems to deliver a performance of 3 times the initial balance (non-compounding) with an estimated 10% DD. I would not say that this result justifies all the technical effort WFA implies: too low a performance, too high a risk. Turning on compounding will increase the DD while only moderately improving the return.

Best regards,

Fd

Hi Fd,

Thank you for your post :o) This reduction in performance is to be expected because the selection criterion for the WFA right now is too simplistic (just best profit); much better results are usually achieved when better selection algorithms (such as those involving rank analysis) are implemented for the optimization phase. Saying that it’s not worth the effort based on these first results is perhaps premature. Thanks again for posting!

Best Regards,

Daniel

It appears that the optimum size of the WFA to contain all possible conditions is the whole data set. You wrote:

“How big should windows be then ? My best guess is that the window needs to be big enough as to contain enough information to ensure that no curve fitting takes place…”

But isn’t curve-fitting what the WFA does by definition? When you find the best parameters for a data window to maximize some metric then you are curve-fitting with respect to that metric.

I appreciate your effort and you are doing a lot of good work, but WFA is an old concept that in my opinion does exactly the opposite of what trading system developers should be doing, and does it multiple times, i.e. it optimizes multiple times. I think this should not be confused with adaptation because that is a very different function.

It is clear to me that WFA will always produce a fitted system based on the selection of a proper metric, and using things like genetic programming to find the best parameters just increases selection bias, as explained in this blog: http://tinyurl.com/bwxgc73

Nice work Daniel. I think your work is unique in the MT community. At least, according to my searches.

IMHO, WFA is the only way to correctly evaluate the adaptability of any setup to market variability. When you optimize on the preceding window and apply the parameters to the next window, you can evaluate how the setup responds to these different market conditions (IS x OOS). So, after guaranteeing that the IS size contains all the degrees of freedom the setup needs and that the OOS generates a significant number of trades, the developer should look for a variety of different combinations of IS x OOS market conditions to test the setup’s behavior.

The objective function is another important factor. It must reflect the kind of result the trader wants to achieve with his/her setup. This is relevant when we use an optimization process that searches for local / global maxima, because the results that do not fit this function will be discarded during the search. As an example, if you search for max net profit only, the optimization result could present a very high trade drawdown.

Personally I use WFA to find a setup that shows stability and profitability in almost all OOS windows. That means a smooth equity curve (and it is very difficult). After that I take all the OOS windows and apply Monte Carlo to analyze the profit and drawdown distributions, define the initial equity and so on. Very close to Bandy’s methods, with some changes.

The problem is that every asset behaves differently. In my analyses, even if different assets are highly correlated, a setup can still produce different results on each of them. So it takes a lot of time to test a setup. And much, much more to find a tradable one.

Regards,

Marcelo