One of the most robust ways of evaluating whether a strategy is still behaving according to its historical distribution of returns is to run Monte Carlo simulations, which let you identify the trading scenarios in which your strategy behaves outside its predicted statistical behaviour. In a Monte Carlo simulation you run thousands of trading scenarios drawn from the same distribution of returns obtained from long term back-tests, and from these scenarios you take the worst result, deriving a worst-case scenario that allows you to stop your strategy at a specific confidence level. For example, I may find from a 100K iteration Monte Carlo simulation (using the 15 year back-testing results of my strategy) that the probability of my system going through a 20% drawdown within the next 10 years is 1 in 100K if the system statistics remain intact. This means that if I reach a 20% drawdown within the next 10 years I can discard the strategy with 99.999% confidence that its statistical characteristics are now different.
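
As a rough illustration of the procedure described above, the following Python sketch resamples per-trade returns with replacement and records the deepest drawdown of each simulated equity curve. The function name `max_drawdowns`, the sample `backtest` array and the trial and trade counts are hypothetical. The sketch also assumes independent trades whose returns compound as fractions of equity, so it is an approximation of the idea rather than the simulator actually used for the results in this post.

```python
import numpy as np

def max_drawdowns(trade_returns, n_trades, n_trials, seed=0):
    """Deepest peak-to-valley drawdown of each simulated equity curve."""
    rng = np.random.default_rng(seed)
    # Assumption: trades are independent, so we resample with replacement.
    sampled = rng.choice(trade_returns, size=(n_trials, n_trades), replace=True)
    # Compound per-trade returns into equity curves that start at 1.0.
    equity = np.cumprod(1.0 + sampled, axis=1)
    # Running peak, including the starting equity of 1.0.
    peak = np.maximum(np.maximum.accumulate(equity, axis=1), 1.0)
    drawdown = 1.0 - equity / peak
    return drawdown.max(axis=1)

# Hypothetical per-trade returns standing in for a long term back-test.
backtest = np.array([0.02, -0.01, 0.015, -0.01, -0.01, 0.03, -0.01, 0.01])

dd = max_drawdowns(backtest, n_trades=200, n_trials=5_000)
worst_case = dd.max()                # deepest drawdown seen in any trial
dd_999 = np.quantile(dd, 0.999)      # drawdown exceeded in roughly 0.1% of trials
print(f"worst case: {worst_case:.2%}, 99.9% quantile: {dd_999:.2%}")
```

A real drawdown that exceeds `worst_case` would then be treated as evidence that the strategy's statistics have changed. Reaching the 1 in 100K level quoted above would need at least 100K trials.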

However, this use of the Monte Carlo simulation is very simple, and it is limited in the sense that you are only stopping strategies along one of the many possible worst case scenarios. But what do I mean by “many” worst case scenarios? Well, the scenario I described above is a convergent Monte Carlo simulation, where we are looking at the absolute worst case that can arise as the number of trades becomes large. This means that no matter how many additional trades we have, the probability of facing a deeper drawdown is close to zero if the statistics of the system remain constant. This is because the simulation already goes beyond the point of guaranteed profitability, a trade number beyond which a system with a positive expectancy will generate a positive return with a confidence above 99.999%. Generating a deeper drawdown than that predicted by a 100K iteration worst case beyond this number of trades becomes extremely hard, as this is effectively the limit of the trading strategy.

What are the other worst cases? The above scenario leads traders to make a very broad assumption, which is that all drawdowns are created equal. If a trader commits to the convergent Monte Carlo worst case, they are effectively saying that they will wait for this worst case before stopping the strategy, as absolute proof that the strategy’s long term edge has failed. But can we find proof that the system has failed before we reach the convergent worst case? The answer is yes, and this will allow you to stop a strategy well before it reaches the much deeper level predicted by the convergent case. The trick is to understand that not all drawdowns are created equal: a smaller drawdown can constitute a worst case provided that it happens under certain conditions. This means that if your convergent worst case is a 30% drawdown, you might be able to say with 99.999% confidence that your system has failed at a 15% drawdown, provided that this drawdown happens under some predefined conditions.

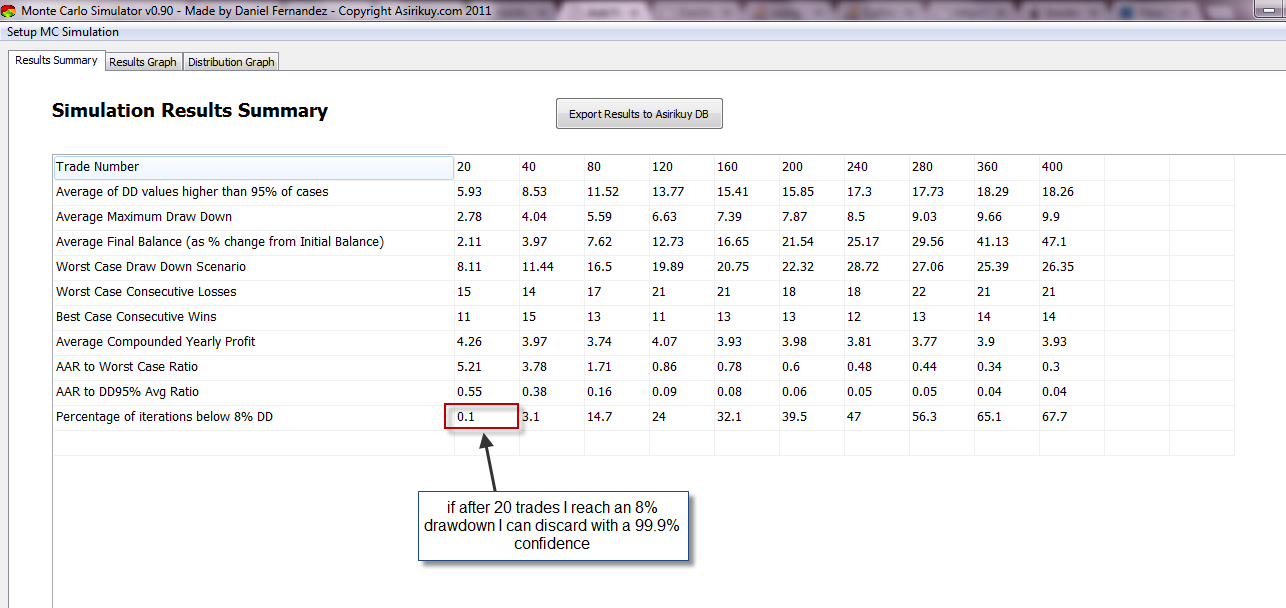

How is this so? The key is in the number of trades that it takes you to reach a certain drawdown level. When you carry out a Monte Carlo simulation across a variety of trade numbers that are smaller than the convergent case, you can find out the level of confidence with which you can discard a system after only a few dozen trades. The above image shows an example of a system where I have carried out simulations across a wide variety of trade numbers, and you can see that the Monte Carlo worst case is different for each trade number. It is also important to look at the number of iterations that stay below the target drawdown figure you want to use for discarding (8% in the example above), as this tells you how confident you can be about discarding your strategy. This is extremely important because a 2 trade MC simulation would tell you that the worst case across 100K simulations is 2 consecutive losses, but this doesn’t mean that the system should be discarded, because the probability of getting this result across the 100K iterations is probably around 50% (meaning that this is simply a highly probable outcome for this trade number).

The analysis for the sample strategy shown above allows me to make predictions for the 20 trade case that tell me when the strategy can be discarded. For example, if I reach a drawdown of 8% after 20 trades I know that I can discard the strategy with a confidence of 99.9%, because the probability that my system would reach an 8% drawdown so quickly is close to zero (0.1%). However, note that as the number of trades goes up, the probability of reaching a drawdown of this size also increases, meaning that if this drawdown is reached after 80 trades I can no longer make this decision, because the probability that I am making a wrong call is much higher. In this case I wouldn’t want to wait until the convergent worst case (around 26-30%) if I can discard my strategy after a drawdown of only 8% that happens under the right circumstances. If, for my distribution of returns, the probability of reaching an 8% drawdown after 20 trades already allows me to discard the strategy with 99.9% confidence, there is no reason to wait for the wider worst case.
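
The decision rule described above can be sketched in Python as follows. This is a minimal sketch, not the simulator behind the tables: the names `prob_drawdown_reached` and `can_discard`, the `backtest` array and the trial count are all hypothetical, and trades are assumed to be independent draws from the back-test distribution, compounded as fractions of equity.

```python
import numpy as np

def prob_drawdown_reached(trade_returns, n_trades, target_dd,
                          n_trials=20_000, seed=0):
    """Fraction of simulated runs whose drawdown reaches target_dd
    within n_trades trades."""
    rng = np.random.default_rng(seed)
    # Assumption: independent trades, resampled with replacement.
    sampled = rng.choice(trade_returns, size=(n_trials, n_trades), replace=True)
    equity = np.cumprod(1.0 + sampled, axis=1)
    # Running peak, including the starting equity of 1.0.
    peak = np.maximum(np.maximum.accumulate(equity, axis=1), 1.0)
    max_dd = (1.0 - equity / peak).max(axis=1)
    return float(np.mean(max_dd >= target_dd))

def can_discard(trade_returns, n_trades, observed_dd, confidence=0.999, **kwargs):
    """True if a drawdown this deep, this early, is rarer than 1 - confidence."""
    p = prob_drawdown_reached(trade_returns, n_trades, observed_dd, **kwargs)
    return p <= 1.0 - confidence

# Hypothetical per-trade returns standing in for a long term back-test.
backtest = np.array([0.02, -0.01, 0.015, -0.01, -0.01, 0.03, -0.01, 0.01])

for n in (20, 40, 80, 160):
    p = prob_drawdown_reached(backtest, n, target_dd=0.08)
    print(f"{n:>3} trades: P(drawdown >= 8%) = {p:.3%}")
```

With 20,000 trials the smallest non-zero probability the sketch can resolve is 0.005%, which is enough for a 99.9% threshold but not for 99.999%; that level needs at least 100K trials.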

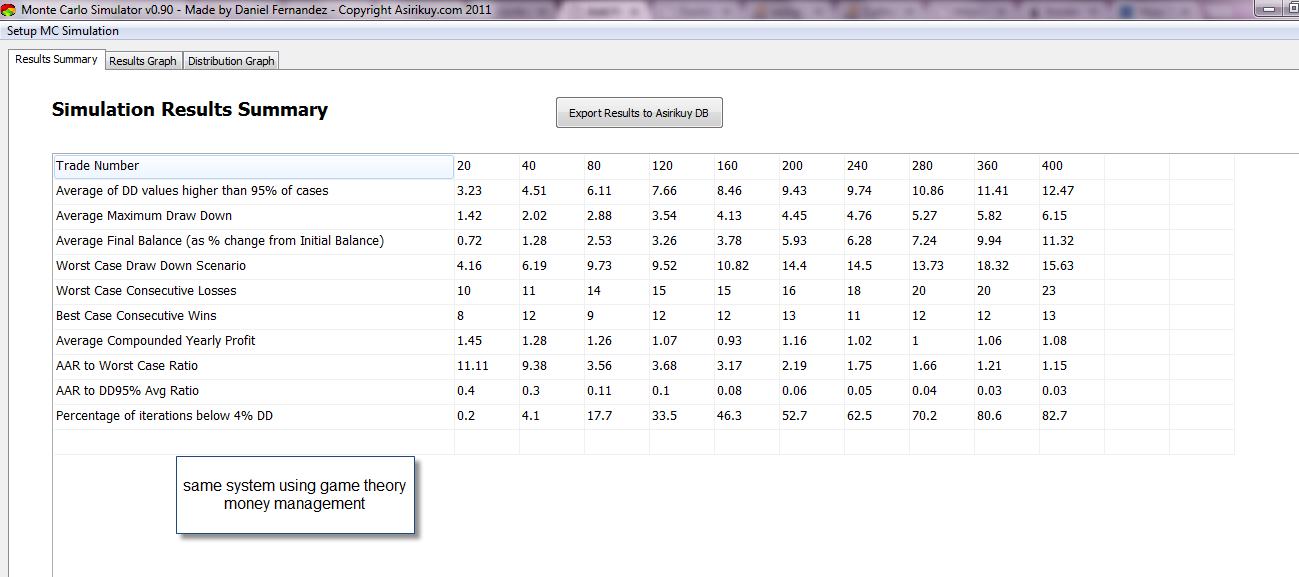

In summary, I want to show you that you can manage your trading systems better if you pay attention not only to drawdown depths but also to the number of trades it takes to reach a certain drawdown level. If you run Monte Carlo simulations for different trade numbers, you can come up with worst case scenarios that allow you to discard strategies when they “nose dive” into drawdown levels that would be close to impossible if their long term distribution of returns were preserved. I also want to call attention to the results of the same system with a game theory money management module (second image), which show how advanced money management affects the strategy’s overall results. When using game theory we could discard at smaller worst case scenarios, especially in the short term (20-40 trades).

The above analysis features, as well as several other enhancements, are currently being implemented in the Asirikuy Monte Carlo simulator, which Asirikuy members will be able to use soon. If you would like to learn more about Monte Carlo simulations and system analysis please consider joining Asirikuy.com, a website filled with educational videos, trading systems, development and a sound, honest and transparent approach towards automated trading in general. I hope you enjoyed this article! :o)

Good article!

You concluded that “When you carry out a Monte Carlo simulation across a variety of trade numbers that are smaller than the convergent case, you can find out the level of confidence with which you can discard a system after only a few dozen trades.”

But if it is not convergent the numbers will change each time you do it, right?

Hi Joe,

Thank you for your comment :o) Yes! This is precisely why it is so useful. Below the convergent trade number you can discard a system at drawdown values below the convergent worst case because the probability of reaching that drawdown across the specified trade number is extremely low. I hope this answers your question,

Best regards,

Daniel

Thanks, but not quite. My take is that if the MC simulation is not convergent then the results for all entries in the tables you show for small trade numbers will be different each time and will often vary widely, so you cannot reach any conclusions from them.

Hi Joe,

Thank you for your post :o) The MC simulation is convergent in the sense that for that number of trades you always achieve the same value (so you can in fact make conclusions). When I say that it is not convergent I mean that if you increase the number of trades the drawdown values increase, meaning that you haven’t reached the convergent worst case (regarding trade number). However you can make conclusions because for each trade number results are always the same (no matter if you repeat the simulation once or 100 times). I hope this helps :o)

Best Regards,

Daniel

Thanks. Are you saying that your MC simulation involves no random sampling of the equity curve? A random sampling to get 10 trades will not converge to same values for 100 and 10,000 trials AFAIK. Maybe you mean a different thing by MC simulation. Do you have an article that explains in detail what you mean by MC simulation and how you do that? In my book MC simulation is sampling of the equity curve with replacement to estimate the distribution of some statistic. In this case the statistic is the DD. It must have a distribution. The distribution for 100 samples of the equity curve will be different when compared to that of 10,000 samples even for the same number of trades from a larger trade sample.

Hi Joe,

In the MC simulations I use the distribution of returns taken from a long term back-test and I then generate 100,000 trials with X trades in each trial, using the per trade profit/loss probabilities derived from this distribution. I use 100,000 trials because this ensures that the MC simulation is convergent regarding its own results (meaning that adding more trials won’t change the results). The tables above show the results for different trade numbers.

As you can see, the results for the worst case DD start to converge when the number of trades reaches a certain value (meaning that running a simulation with more trades doesn’t change the result). This is what I am calling a “convergent” MC simulation regarding trade number, meaning that the resulting statistics do not vary when you increase the number of trades in a simulation. However, as I explained above, my results for simulations with a low number of trades do not change between runs, because all simulations are convergent regarding their individual results (meaning that introducing more trials doesn’t change the result significantly).

So the convergence of the drawdown as a function of the trade number per simulation shouldn’t be confused with the convergence of each individual simulation as a function of the number of trials (which I always guarantee on each run). You can search the blog for MC simulations for more posts about how I carry this analysis :o) I hope this helps,

Best Regards,

Daniel
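
The two kinds of convergence distinguished in this reply (stability as trials are added, versus stability as trades are added) can be checked empirically. The Python sketch below is one hypothetical way to do so and is not code from the thread: it repeats a simulation with several random seeds and reports how far a high drawdown quantile moves between repetitions. The names `dd_quantile` and `spread_across_seeds`, the `backtest` array and the choice of the 99.9% quantile (a more stable statistic than the single worst trial) are all assumptions made for illustration.

```python
import numpy as np

def dd_quantile(trade_returns, n_trades, n_trials, q=0.999, seed=0):
    """q-quantile of the maximum drawdown over n_trials resampled runs."""
    rng = np.random.default_rng(seed)
    # Assumption: independent trades, resampled with replacement.
    sampled = rng.choice(trade_returns, size=(n_trials, n_trades), replace=True)
    equity = np.cumprod(1.0 + sampled, axis=1)
    peak = np.maximum(np.maximum.accumulate(equity, axis=1), 1.0)
    max_dd = (1.0 - equity / peak).max(axis=1)
    return float(np.quantile(max_dd, q))

def spread_across_seeds(trade_returns, n_trades, n_trials, seeds=range(5), q=0.999):
    """Range of the quantile estimate over independent repetitions."""
    estimates = [dd_quantile(trade_returns, n_trades, n_trials, q, seed=s)
                 for s in seeds]
    return max(estimates) - min(estimates)

# Hypothetical per-trade returns standing in for a long term back-test.
backtest = np.array([0.02, -0.01, 0.015, -0.01, -0.01, 0.03, -0.01, 0.01])

# Convergence in the number of trials, at a fixed trade number.
for n_trials in (100, 1_000, 10_000):
    s = spread_across_seeds(backtest, n_trades=20, n_trials=n_trials)
    print(f"{n_trials:>6} trials: spread of 99.9% DD quantile = {s:.3%}")

# Convergence in the number of trades, at a fixed number of trials.
for n_trades in (20, 80, 320):
    d = dd_quantile(backtest, n_trades=n_trades, n_trials=10_000)
    print(f"{n_trades:>4} trades: 99.9% DD quantile = {d:.2%}")
```

If the spread in the first loop does not shrink to a negligible level, the estimate for that trade number has not yet converged in the number of trials, whatever happens in the second loop.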

Thanks for the detailed response. However, I see several assumptions (potential problems) with your approach, some of a fundamental nature. (1) Your sampling assumes equal probability of all samples without replacement. If this is not true you must weigh each result by its probability. You cannot take the average if all samples do not have the same probability. (2) It assumes no serial correlation. (3) It assumes trades do not overlap. (4) The sampling introduces bias and error that must be measured. (5) This is the most important one: the sampling ignores the role of neutral position returns. In my opinion your MC simulation applies only to a tiny subset of systems where the above assumptions are not severe.

Hi Joe,

Thank you for your reply :o) Well I would certainly want to improve the simulation technique I’m using but let me explain a few things:

1. Resampling is obviously done with replacement. All samples have the same probability to be selected (why do you think this would not be the case?). How do you suggest the method can be improved in this regard ?

2. This is true, there is no serial correlation. However, the effect of including serial correlation would be very small for our particular systems, because they lack significant serial correlations in the long term. I agree that this leads to inaccurate results for systems with strong serial correlation (basket systems, systems with pyramiding, etc).

3. This is also true, in our systems there is no trade overlap.

4. Yes this is measured comparing to the initial distribution of returns. Do you have any preferred methods to measure this ?

5. I do not understand this very well. What do you mean by neutral position returns ?

You’re right in that the MC technique we use is not universal and leads to problems when it is used in trading systems that violate some of the assumptions we make (such as a lack of strong serial correlations, multiple open positions, etc). Again, any suggestions you make for improvements will be most welcome. Thanks again for writing :o)

Best Regards,

Daniel

Thanks. My comments on your answer:

“All samples have the same probability to be selected (why do you think this would not be the case?). ”

I wasn’t referring to the probability of an algorithm selecting the samples but of a trading system generating them. This is a basic problem MC simulators make.

“This is true, there is no serial correlation.”

This has to be shown for each case and not only assumed.

” This is also true, in our systems there is no trade overlap.”

Again, most systems overlap trades; the fact that they do not generate them due to restrictions in the code does not mean that trades do not overlap. Think of going long when SMA(5) > SMA(30). After the first trade there are also trades at every bar until the relationship reverses, but backtesters only take the first signal.

“Do you have any preferred methods to measure this ?” [sampling error]

I wouldn’t know where to start:)

” I do not understand this very well. What do you mean by neutral position returns ?”

I mean that some small change in the starting bar of the backtest can affect the position returns and the drawdown values in significant ways.

Hi Joe,

Thank you for your comments :o) Oh, ok, your answer clears things up a lot more! Let me answer again:

Yes, this is true.

At Asirikuy, systems are coded without any trade chain dependency. This means that system signals are NOT dependent on the starting point. The systems act on all signals, but new signals do not generate new trades; they simply update the SL/TP values of the running trade. This means that results do NOT depend significantly on your startup point and you are acting on all existing trade signals (the system never ignores any signals). Signals that concatenate (a single trade that is composed of many update signals) generate a single result whose outcome is included in the distribution of returns, therefore the MC simulation completely accounts for this concatenation of signals. However, there is never trade overlap (there is only one position open at any given time) and no signals are ever ignored (every signal generates either a new position, if no position is open, or an update of the system exit SL/TP values).

Asirikuy systems – as I mentioned above – are coded to completely avoid this. Changing the starting point doesn’t change system statistics in any significant way.

So, please consider that Asirikuy systems are a specific group of strategies coded around some key principles that have served to guide our development of MC simulations. You’re right in that this should be clearer on future posts as obviously my conclusions here do not apply to “all systems” but merely to those we have developed. I hope this clears things up :o)

Best Regards,

Daniel

Thanks. Still, you avoided answering the first point: when you sample the trades in the MC you assume equal probability of these samples. As I said, although you are sampling with equal probability this is NOT equivalent to the samples having equal probability of occurring in the market. Before you can average statistics you must prove that ALL these samples have the same probability of occurring in the market. Unless this is true, the fundamental assumption of MC is violated and the results may be worthless.

Just found a scientific paper about forecasting maximum DD:

http://papers.ssrn.com/sol3/papers.cfm?abstract_id=2049584

The author also investigated how the max. exp. dd changes depending on the level of serial correlation.