Several days ago I wrote a post dealing with trade frequency and which characteristics are desirable in traded systems. After a ton of discussion in the comments of that post, where I gave arguments in favor of what I had said, it seemed evident that the point I wanted to make did not come across clearly. I want to dedicate today’s post to clarifying this issue of trading frequency, what my opinion is in this regard and what evidence I have to support my ideas. I will go over the points I think were not discussed clearly and give some more insight into the arguments behind them.

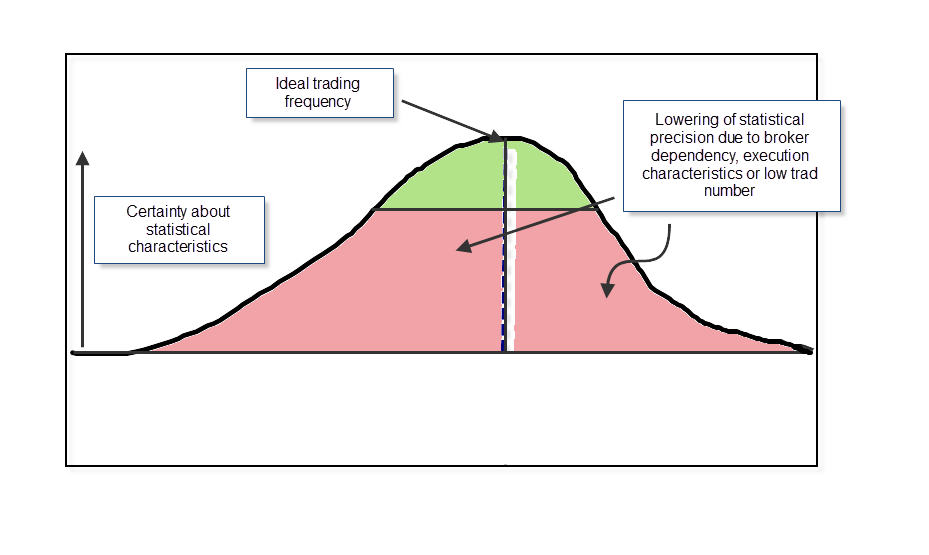

The last post talked about why I believe we should not aim for the “highest possible” trading frequency. This was taken by some as a defense of systems that don’t trade a lot, and people interpreted it as me taking a stance in favor of systems with very low trading frequencies. I will reformulate my opinion so that there is no room for this sort of confusion: I think that you should aim for the highest possible trading frequency up to the point where problems in simulation accuracy start to make a higher trading frequency increase your uncertainty about the statistical characteristics of your strategy. Certainly it makes sense to use systems that trade more, as they give more meaningful results in some respects, but the problem is that in FOREX TRADING the more trades you have, the more broker dependency and execution variables become an issue.

I have conclusive evidence that proves that beyond a certain point trade frequency turns into an enemy more than a friend. I built several systems with different trading frequencies, trading only on signals derived from previously closed candles on the one hour time frame. The systems were simulated over the past 2 years and achieved 200, 400, 500, 1000 and 2000 trades respectively. I then tested these systems across three different brokers for a period long enough to achieve 20 trades on each system. What I see is that the short term statistical results vary much more between brokers for the systems with the highest trading frequency, while the systems with lower trading frequency show less variation. This is strong evidence that broker dependency plays a more important role in systems that have a higher trading frequency.

It is also a false assumption that a 5 minute system with 200 trades is as statistically relevant as a system with 200 trades on the daily charts. Even though the number of bars used to derive these trades was the same in both cases (for example 200 bars), the fact is that the REAL amount of information contained within 200 daily candles is MUCH BIGGER than the amount of information contained in 200 five minute bars. It is way too simplistic to say that a 5 minute bar has the same relevance as a daily bar; the implicit amount of information contained is simply not equal. A system that trades 200 times in one month doesn’t give you the same statistical information as a system that traded 200 times within a ten year period. Although numerically they are the same, in reality the 10 year information is much more significant because the system simply traded across many more market conditions (it did trade across 10 years!).

You can in fact measure this to some extent by building several systems and comparing their chances of success. I built 100 systems on a ten year period (1990-2000) and then walked them forward to evaluate their performance across the following 10 years, so the systems then traded from 2000 to 2010. I then did the same exercise for one hour systems built on the equivalent time period, which generated 500-700 trades, and walked them forward for the length of that period. I also did this in a “moving window” fashion, averaging the percentage of successful systems across a 10 year period. The results show conclusively that the expected success of a system that takes the same number of trades on a higher time frame across a longer time is MUCH HIGHER than that of a lower time frame system with the same number of trades. The probability that future behavior will be similar to past results INCREASES as the time frame gets longer, even if the number of trades remains the same.

Another misconception is that you can take slippage into account by introducing some average cost across your system’s results. This can be proved wrong pretty easily, as real world distortions cause differences in entry/exit points which eventually reverse trading outcomes. The cost caused by slippage is NOT only the cost of an additional commission but can sometimes be the success of a whole trade. This is the reason why you cannot average out slippage: this phenomenon does not merely increase the cost per trade, the differences it causes in entries and exits often cause complete inversions of outcome. The exact same thing goes for broker dependency, where you will often get purely negative inversions which decrease your system’s performance. You can run a test of this to see it for yourself: including an average additional cost will underestimate the true effects of slippage and broker dependency (as you don’t take into account the cost of reverting a certain percentage of positive outcomes).
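To make the inversion argument concrete, here is a minimal sketch in Python. Everything in it is hypothetical and chosen only for illustration: the `simulate_long_trade` helper, the price path, the stop and target levels, and the 2 pip figure. It compares a fixed 2 pip cost deducted from a trade against the same trade run on a feed that is shifted by 2 pips.

```python
def simulate_long_trade(path, entry, stop, target):
    """Walk a long trade along a price path and return its result in pips.

    Hypothetical helper: prices are integers (pips) to keep the arithmetic exact.
    The trade closes at the stop or the target, whichever is touched first.
    """
    for price in path:
        if price <= stop:
            return stop - entry
        if price >= target:
            return target - entry
    # Neither level was touched: close at the last price of the path.
    return path[-1] - entry


# Illustrative numbers only (not taken from any real system or broker).
entry, stop, target = 100, 80, 140
ideal_path = [95, 81, 110, 140]  # the low of 81 misses the stop by 1 pip

# 1) Backtest result on the "ideal" feed.
ideal_result = simulate_long_trade(ideal_path, entry, stop, target)

# 2) Average-cost model: same outcome, minus a fixed 2 pip cost.
average_cost_result = ideal_result - 2

# 3) A feed that differs by 2 pips: the stop is now hit before the target.
shifted_path = [price - 2 for price in ideal_path]
shifted_result = simulate_long_trade(shifted_path, entry, stop, target)

print(ideal_result, average_cost_result, shifted_result)
```

In this toy case the average-cost model still reports a winner, while the shifted feed turns the same trade into a full stop-loss. The gap between those two numbers is the part that a per-trade average cost cannot capture.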

Long story short, I am not telling you to use systems that take the smallest possible number of trades. I am telling you that as the number of trades increases, your probability of running into problems with broker dependency and execution related issues is GREATER. It is therefore EXTREMELY important to be aware of this and to use more advanced simulation techniques to evaluate these effects on systems that trade more. For example, Monte Carlo simulations might be a way to cover slippage and broker dependency (at least in part), but the most they will do is reveal how big the uncertainty in your drawdown and worst case characteristics is. Trade with the highest possible frequency which allows you to know your statistical outcomes with good precision.
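As a rough illustration of the Monte Carlo idea, the sketch below resamples a list of per-trade results with replacement and looks at the spread of maximum drawdowns this produces. This is only one simple flavor of Monte Carlo (a bootstrap), not necessarily the procedure used for the systems discussed here, and the `trades` list, function names and parameters are all made up for the example.

```python
import random


def max_drawdown(results):
    """Largest peak-to-valley drop of the cumulative sum of trade results."""
    equity = peak = worst = 0.0
    for r in results:
        equity += r
        peak = max(peak, equity)
        worst = max(worst, peak - equity)
    return worst


def monte_carlo_drawdowns(trades, n_runs=1000, seed=0):
    """Bootstrap the trade list n_runs times and return the sorted max drawdowns."""
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    draws = []
    for _ in range(n_runs):
        # Resample with replacement, keeping the same number of trades.
        sample = [rng.choice(trades) for _ in trades]
        draws.append(max_drawdown(sample))
    return sorted(draws)


# Hypothetical per-trade results in pips, for illustration only.
trades = [40, -20, 35, -20, -20, 50, -20, 30, -20, 45]

draws = monte_carlo_drawdowns(trades)
median_dd = draws[len(draws) // 2]
p95_dd = draws[int(0.95 * len(draws))]
print("median max drawdown:", median_dd)
print("95th percentile max drawdown:", p95_dd)
```

The distance between the median and the upper percentiles gives a feel for how uncertain the worst case is. A resampling like this does not model outcome inversions by itself; it only shows the spread implied by the trades you feed into it.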

If you would like to learn more about my work in automated trading and how you too can learn to build systems with robustness in mind, please consider joining Asirikuy.com, a website filled with educational videos, trading systems, development and a sound, honest and transparent approach towards automated trading in general. I hope you enjoyed this article! :o)

“Trade with the highest possible frequency which allows you to know your statistical outcomes with good precision.”

I like this article quite a bit, and I was wondering two things. First, does this apply to individual systems only, or does it also apply to portfolios of many systems, in whole or in part?

Also, what is the highest possible frequency that would allow you to know the statistical outcome with good precision on a ten-eleven year time frame? Did the studies that you conducted give you a frame of reference for this, maybe a range?

Awesome article, can’t wait for the answer!