I am a big fan of Oanda as a broker. They are regulated in the US, Europe and Australia, they have very limited slippage, and they offer some unique features, such as Java and REST APIs for direct access to their trading facilities and an incredible fractional lot sizing system that allows you to trade Forex down to 1 unit (0.00001 lots). Truth be told, you won't find what Oanda offers anywhere else in the Forex market (at least I haven't, and I've looked hard!). However, I recently found out that Oanda has some important problems with the high-resolution historical data that you can obtain from their servers. When I tried to use this data for data-mining, hoping to have a portion of our data match the broker we use for live trading, I found that its quality was much worse than you would expect from a broker like Oanda.


```python
# Function to get historical 1M data from Oanda
# Copyright Daniel Fernandez
# http://mechanicalforex.com
# https://asirikuy.com
# Requires the requests, python-dateutil, pytz and pandas libraries.
# Targets Oanda's legacy v1 REST endpoint (/v1/candles, bid/ask candle format).
# The output timestamps are converted to GMT+1/+2 (Europe/Madrid time).

import datetime

import pandas as pd
import requests
from dateutil import parser
from pytz import timezone

# Map from the usual symbol names to Oanda instrument names
OandaSymbol = {
    'EURUSD': 'EUR_USD',
    'GBPUSD': 'GBP_USD',
    'USDJPY': 'USD_JPY',
    'AUDUSD': 'AUD_USD',
    'USDCHF': 'USD_CHF',
    'USDCAD': 'USD_CAD',
    'EURCHF': 'EUR_CHF',
    'EURGBP': 'EUR_GBP',
    'EURJPY': 'EUR_JPY',
    'AUDJPY': 'AUD_JPY',
    'AUDCAD': 'AUD_CAD',
    'GBPJPY': 'GBP_JPY',
    'GBPCHF': 'GBP_CHF',
    'GBPAUD': 'GBP_AUD',
    'GBPCAD': 'GBP_CAD',
    'EURAUD': 'EUR_AUD',
    'EURCAD': 'EUR_CAD',
}


def get_historical_data(symbol, tokenValue, endingDate="2015-01-01"):
    print("getting data for {}".format(symbol))

    url = "https://api-fxtrade.oanda.com/v1/candles"
    headers = {'Authorization': "Bearer %s" % tokenValue,
               'Connection': 'Keep-Alive',
               'Accept-Encoding': 'gzip, deflate'}
    stopDate = datetime.datetime.strptime(endingDate, "%Y-%m-%d")
    localZone = timezone('Europe/Madrid')

    finalRates = None
    lastCandleTime = None

    while True:
        params = {'instrument': OandaSymbol[symbol], 'count': 5000, 'granularity': 'M1'}
        if lastCandleTime is not None:
            # Page backwards: ask for the 5000 bars ending on the day of the oldest bar received so far
            date = parser.parse(lastCandleTime[:10]) + datetime.timedelta(hours=10)
            if date < stopDate:
                break
            params['end'] = date.strftime("%Y-%m-%d")

        try:
            resp = requests.get(url, headers=headers, params=params)
            resp.raise_for_status()
            candles = resp.json()['candles']
        except (requests.RequestException, ValueError, KeyError) as e:
            print("ERROR: {}".format(e))
            break

        previousCandleTime = lastCandleTime
        ratesTime, ratesOpen, ratesHigh, ratesLow, ratesClose, ratesVolume = [], [], [], [], [], []

        # Walk from newest to oldest so lastCandleTime ends on the oldest bar of this batch
        for candle in reversed(candles):
            # Candle times are RFC3339 strings in UTC; convert them to GMT+1/+2
            correctedTimeStamp = parser.parse(candle['time']).astimezone(localZone)
            # Keep Monday-Friday bars only (weekday measured in GMT+1/+2)
            if correctedTimeStamp.isoweekday() < 6:
                # Store naive datetimes holding the GMT+1/+2 wall-clock time
                ratesTime.append(correctedTimeStamp.replace(tzinfo=None))
                ratesOpen.append(float(candle['openBid']))
                ratesHigh.append(float(candle['highBid']))
                ratesLow.append(float(candle['lowBid']))
                ratesClose.append(float(candle['closeBid']))
                ratesVolume.append(float(candle['volume']))
            lastCandleTime = candle['time']

        # Stop if the server returned nothing older than what we already have
        if not candles or lastCandleTime == previousCandleTime:
            break

        ratesFromServer = pd.DataFrame({"1_Open": ratesOpen,
                                        "2_High": ratesHigh,
                                        "3_Low": ratesLow,
                                        "4_Close": ratesClose,
                                        "5_Volume": ratesVolume}, index=ratesTime)

        if finalRates is None:
            finalRates = ratesFromServer
        else:
            finalRates = pd.concat([finalRates, ratesFromServer]).sort_index()
        # Consecutive requests overlap, so keep a single row per timestamp
        finalRates = finalRates[~finalRates.index.duplicated(keep='first')]

        print("{}, {}".format(finalRates.index[0], lastCandleTime))

    if finalRates is not None:
        finalRates.to_csv("OANDA_" + symbol + '_1.csv', date_format='%d/%m/%y %H:%M', header=False)
```


We wanted to use data straight from Oanda for simulations because some systems depend significantly on the data feed they are simulated on. This means that you can get different results using data from broker A versus data from broker B, which is why you would want to use data from your own broker to obtain the most realistic simulation possible. Since Oanda's API lets you request historical data across any desired time interval, I decided to use our regular high-quality one-minute OHLC historical data set for 1986-2005 and then use data obtained from the Oanda servers for the remainder (2005-2015). The hope was that having 10 years of data from the same broker we use for live trading would make the data set a better proxy for live trading for our machine learning and price action based systems at Asirikuy.
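The splice described above can be sketched with pandas. This is a minimal illustration, not necessarily the exact procedure we used: it assumes both sources are already pandas DataFrames indexed by timestamps in the same time zone (GMT+1/+2) with matching column names, and the helper name `splice_feeds` is hypothetical.

```python
import pandas as pd


def splice_feeds(base: pd.DataFrame, broker: pd.DataFrame, cutoff: str) -> pd.DataFrame:
    """Use `base` for bars before `cutoff` and `broker` for bars at or after it.

    Assumes both frames share column names and a timestamp index in the same time zone.
    """
    cutoff_ts = pd.Timestamp(cutoff)
    older = base[base.index < cutoff_ts]       # long-term history, e.g. 1986-2005
    newer = broker[broker.index >= cutoff_ts]  # broker's own data, e.g. 2005-2015
    return pd.concat([older, newer]).sort_index()


# Toy example: two tiny 1M feeds that overlap around the cutoff
idx_base = pd.date_range("2004-12-31 23:58", periods=4, freq="min")
idx_broker = pd.date_range("2004-12-31 23:59", periods=3, freq="min")
base = pd.DataFrame({"close": [1.0, 1.1, 1.2, 1.3]}, index=idx_base)
broker = pd.DataFrame({"close": [2.0, 2.1, 2.2]}, index=idx_broker)
spliced = splice_feeds(base, broker, "2005-01-01")
print(spliced)
```

Cutting both feeds at a single timestamp means no bar can come from both sources, so no duplicate handling is needed.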

After obtaining the 1M data, I built the 1H candles we most commonly use for our system simulations and saw no significant problems with the data. A few hours were missing from the 1H feed, but this is not unusual, since brokers often perform maintenance or face similar issues that leave a few 1H holes in the data. Over a ten-year period, missing 2 or 3 hours should not cause significant problems. However, this initial analysis proved far too optimistic once I looked at the 1M data in detail, prompted by the initial observations of new Asirikuy member GeekTrader.
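Building 1H candles from 1M bars and listing the hours with no data at all can be sketched as follows. The function names and the lowercase `open`/`high`/`low`/`close`/`volume` column names are assumptions for illustration, and the Monday-Friday filter is a simplification that ignores the exact Sunday open and Friday close.

```python
import pandas as pd


def to_hourly(m1: pd.DataFrame) -> pd.DataFrame:
    """Aggregate 1M OHLCV bars into 1H bars; hours without any 1M bar get NaN prices."""
    return m1.resample("1h").agg(
        {"open": "first", "high": "max", "low": "min", "close": "last", "volume": "sum"}
    )


def missing_hours(h1: pd.DataFrame) -> pd.DatetimeIndex:
    """Monday-Friday hours whose 1H bar has no price data."""
    weekday = h1.index.dayofweek < 5
    return h1.index[weekday & h1["open"].isna().to_numpy()]


# Toy example: Monday 2015-03-02, 00:00-02:59 with the whole 01:00 hour removed
idx = pd.date_range("2015-03-02 00:00", periods=180, freq="min")
m1 = pd.DataFrame({"open": 1.0, "high": 1.1, "low": 0.9, "close": 1.0, "volume": 1.0}, index=idx)
m1 = m1[m1.index.hour != 1]
h1 = to_hourly(m1)
print(missing_hours(h1))
```

Note that an hour only shows up as missing when all 60 of its 1M bars are absent, which is why a 1H check alone can hide many shorter holes.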


When GeekTrader looked at the 1M data from Oanda, he noticed many holes in the 2005-2015 period, most of them lasting around 0.2-0.4 hours (12-24 minutes) but some exceeding one hour (these were the few I had detected on the 1H charts). Since I had access to live-recorded 1M data and some historical tick data from Oanda for 2015, I was able to check whether these holes were actually present in the live feed. To my surprise, the live feed did not show them, meaning that the Oanda servers somehow failed to properly record the 1M data from their live tick feed. Since Oanda sadly no longer offers their historical tick data for download, I could not confirm this for earlier years, but it was certainly true for the 2015 data. I also confirmed that the holes were present on both the demo and live servers, and that they were not caused by my scripts, by manually downloading the data across these problematic periods. The holes were also present when downloading data through the Java API or through MultiCharts.
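One simple way to find holes like these is to look at the time elapsed between consecutive 1M bars: anything longer than one minute marks missing bars. The sketch below uses a hypothetical `find_gaps` helper and assumes the bar timestamps are a pandas `DatetimeIndex`; it reports each hole and its length in hours. Weekend closures would show up as well and need to be filtered out separately.

```python
import pandas as pd


def find_gaps(index: pd.DatetimeIndex, bar: str = "1min") -> pd.DataFrame:
    """Return each hole between consecutive bars with its start, end and length in hours.

    `start` is the first missing bar and `end` is the first bar after the hole.
    """
    idx = index.sort_values()
    step = pd.Timedelta(bar)
    deltas = idx[1:] - idx[:-1]   # time between each bar and the next one
    mask = deltas > step          # more than one bar period means bars are missing
    starts = idx[:-1][mask] + step
    ends = idx[1:][mask]
    hours = (ends - starts) / pd.Timedelta(hours=1)
    return pd.DataFrame({"start": starts, "end": ends, "hours": hours})


# Toy example: remove 18 bars (10:00-10:17), a hole of 0.3 hours
full = pd.date_range("2015-03-02 09:00", "2015-03-02 11:00", freq="min")
bars = full[(full < "2015-03-02 10:00") | (full > "2015-03-02 10:17")]
gaps = find_gaps(bars)
print(gaps)
```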

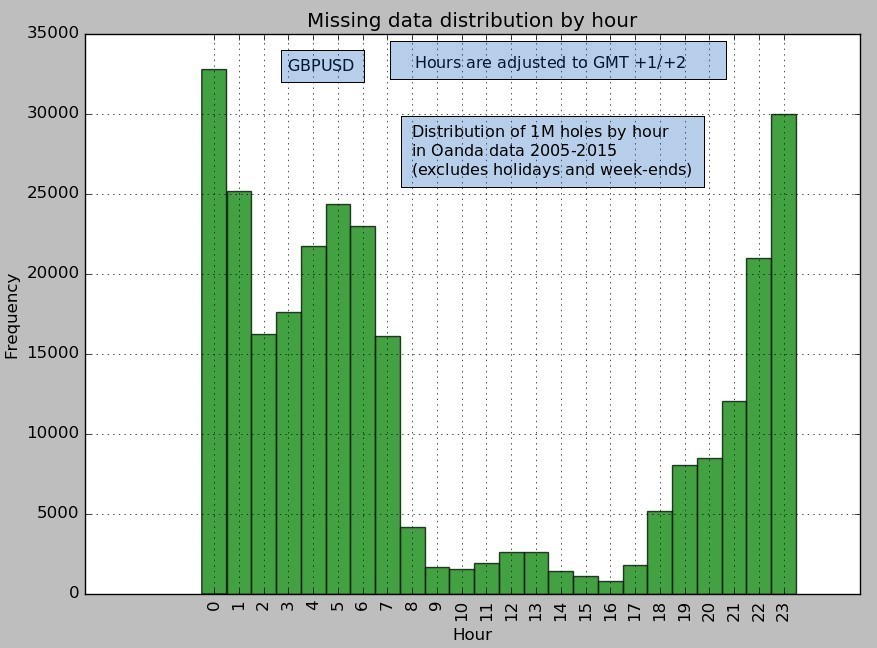

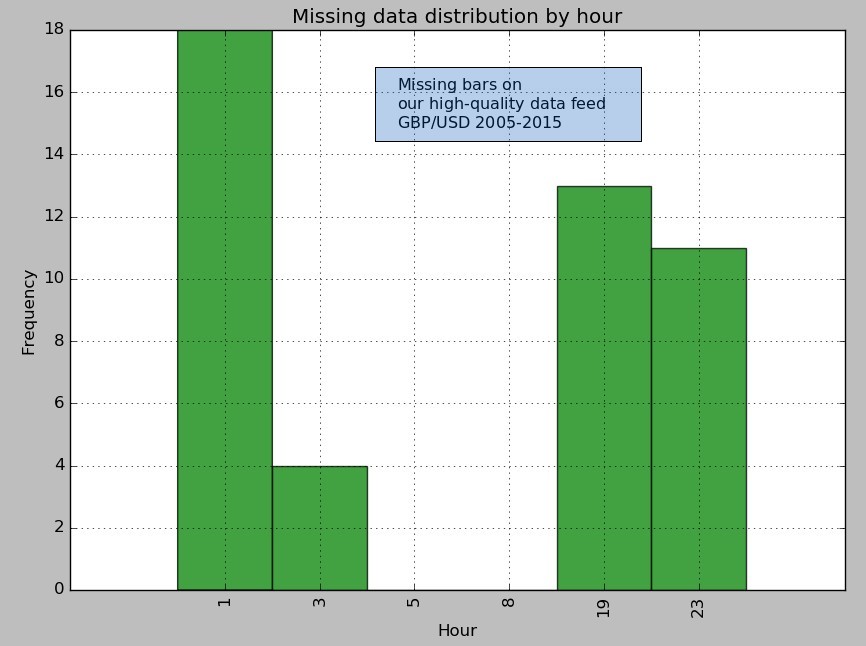

Analyzing the data feed in more detail revealed a similar distribution of missing bars for most symbols, in line with the images shown above (GBPUSD is shown as a representative example). Most symbols have 10-14% of the expected 1M bars missing, and most of the missing bars fall between 22:00 and 07:00 GMT+1/+2. Many of the gaps coincide with important news releases, so the high/low ranges of many candles are significantly underestimated. Also, although missing minutes are least common during the European session, hundreds of minutes are still missing at these times, something that should definitely not happen at hours with such high liquidity. In contrast, in our high-quality data feed aggregated from a prime liquidity provider, the total number of missing bars is less than 0.01% (46 out of 2,417,760 expected bars).
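The missing-bar percentage and its distribution by hour of day can be computed by comparing the received timestamps against a grid of expected minutes. This is a sketch with a hypothetical `missing_stats` helper; it treats every Monday-Friday minute as expected, which is a simplification of real market hours and holidays. For reference, 46 missing bars out of 2,417,760 expected works out to 100 × 46 / 2,417,760 ≈ 0.0019%.

```python
import pandas as pd


def missing_stats(index: pd.DatetimeIndex, start: str, end: str):
    """Percentage of expected 1M bars missing, and missing-bar counts per hour of day.

    Every Monday-Friday minute between `start` and `end` (inclusive) is treated as expected,
    a simplification that ignores holidays and the exact weekly open/close times.
    """
    expected = pd.date_range(start, end, freq="min")
    expected = expected[expected.dayofweek < 5]
    missing = expected.difference(index)
    pct = 100.0 * len(missing) / len(expected)
    by_hour = pd.Series(missing.hour.astype(int)).value_counts().reindex(range(24), fill_value=0)
    return pct, by_hour


# Toy example: ten expected Monday bars, two of them absent
expected_bars = pd.date_range("2015-03-02 00:00", "2015-03-02 00:09", freq="min")
received = expected_bars.delete([3, 4])
pct, by_hour = missing_stats(received, "2015-03-02 00:00", "2015-03-02 00:09")
print(pct, by_hour.loc[0])

# The comparison figure quoted in the text, as a percentage
print(100 * 46 / 2417760)
```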


What surprises me most is that this 1M data does not reflect what actually happened at Oanda. For periods where I have tick or live-recorded data available, the holes do not appear, which suggests that the problem lies in how Oanda recorded their high-resolution historical data. Recording accuracy clearly does not seem to have been a priority. Oddly, there are some instances where the 1H high/low values do not match a reconstruction from the 1M data (but are closer to our high-quality data), suggesting that the higher-timeframe data was recorded more accurately than the high-resolution data. I have now contacted Oanda and hope to get an answer from them regarding the very poor quality of the data obtained from their servers.
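Checking whether a broker's 1H high/low values agree with a reconstruction from its 1M bars can be sketched as below. The helper name, the lowercase `high`/`low` column names and the tolerance are assumptions for illustration; the function returns the hours where either extreme differs by more than `tol`.

```python
import pandas as pd


def hourly_mismatches(h1: pd.DataFrame, m1: pd.DataFrame, tol: float = 1e-9) -> pd.DataFrame:
    """Hours where the broker's 1H high/low differ from the values rebuilt from its 1M bars."""
    rebuilt = m1.resample("1h").agg({"high": "max", "low": "min"}).dropna()
    # Left columns keep their names; rebuilt columns become high_m1 / low_m1
    joined = h1[["high", "low"]].join(rebuilt, rsuffix="_m1", how="inner")
    bad = ((joined["high"] - joined["high_m1"]).abs() > tol) | (
        (joined["low"] - joined["low_m1"]).abs() > tol
    )
    return joined[bad]


# Toy example: the 1H feed shows a 01:00 high that the 1M bars never reached
idx = pd.date_range("2015-03-02 00:00", periods=120, freq="min")
m1 = pd.DataFrame({"high": [1.20] * 60 + [1.30] * 60, "low": [1.10] * 60 + [1.15] * 60}, index=idx)
h1 = pd.DataFrame({"high": [1.20, 1.35], "low": [1.10, 1.15]},
                  index=pd.date_range("2015-03-02 00:00", periods=2, freq="1h"))
result = hourly_mismatches(h1, m1)
print(result)
```

A 1H high above the rebuilt 1M high (or a 1H low below the rebuilt 1M low) is consistent with 1M bars that missed part of the price action during that hour.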

In the meantime, it seems fair to say that the feed dependency seen in system simulations using Oanda historical data may simply stem from this data being of much lower quality than our high-quality data set. We would need a data set of decent quality to perform any meaningful measurement of broker dependency. If you would like to learn more about evaluating historical data and how you too can get access to long-term 1M data with almost no missing bars, please consider joining Asirikuy.com, a website filled with educational videos, trading systems, development and a sound, honest and transparent approach towards automated trading.